Blog

May 8, 2026

I asked ChatGPT why companies come to Solid. Here’s what it found

Yoni Leitersdorf

CEO & Co-Founder

A qualitative analysis of our last two months of customer and prospect conversations shows a clear shift: enterprises are no longer asking whether AI can help with data. They want to know HOW to do it

Last week, I did a very startup-y thing.

I took a bunch of our recent customer and prospect interactions, anonymized the sensitive stuff, and asked ChatGPT a simple question:

Why are organizations coming to Solid right now?

Not what do we think they want. Not what our pitch says they should want. Not what the analyst reports say the market wants.

What are they actually trying to solve?

This is not a scientific survey. It’s not a Gartner Magic Quadrant. It’s not statistically significant. It’s a qualitative analysis of the last couple of months of conversations, pilot plans, solution proposals, and meeting summaries.

Still, the pattern was surprisingly clear.

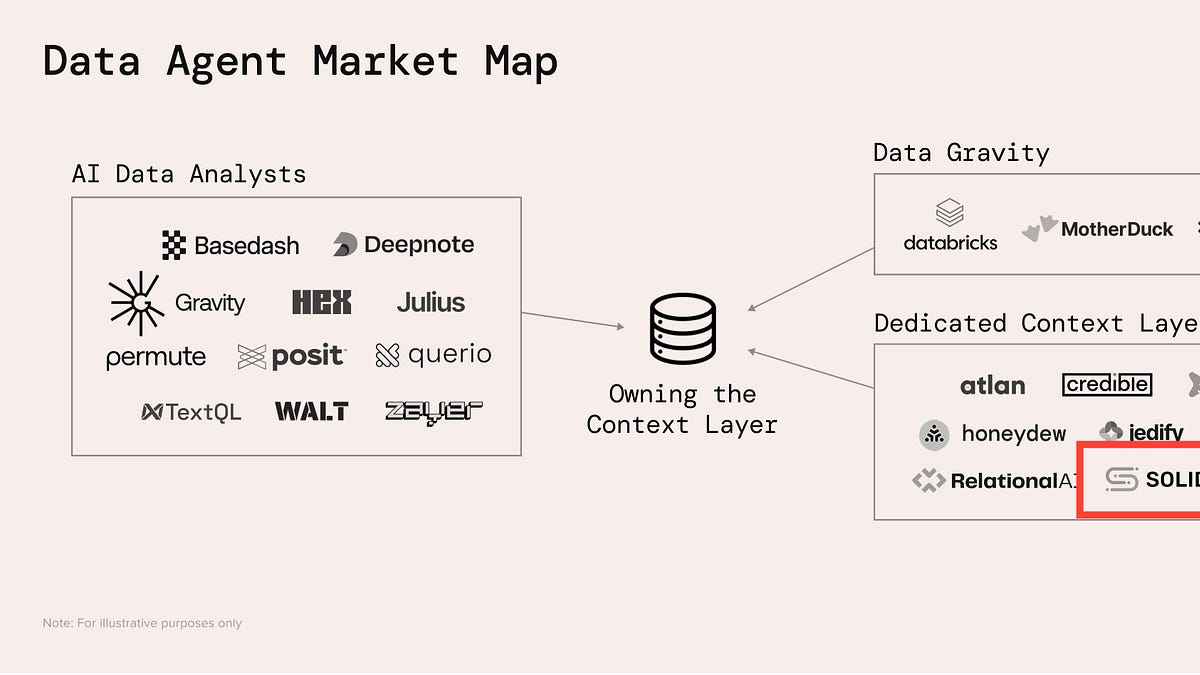

Companies are not coming to us because they woke up one morning and decided they need a semantic layer.

They’re coming because they want AI to do real work with their data, and they’re discovering that the missing piece is the context layer, and semantics, that makes their data understandable, governed, and reliable.

In other words: AI has created the urgency. Messy enterprise data has created the blocker.

The short version: everyone wants agents, but agents need trusted data

A year ago, most conversations about AI and data were still vague.

“We want to use GenAI.”

“We want chat with data.”

“We want business users to self-serve.”

“We want to reduce analyst bottlenecks.”

Those conversations still happen. But they’re getting sharper.

Today, the question is much more concrete:

How do we let an AI agent query our data, understand our metrics, use the right joins, respect our business definitions, and return something people actually trust?

That’s a very different conversation.

It’s no longer about a chatbot sitting on top of a warehouse and hoping for the best. We wrote about that early on in Data Chatbots: what people are really doing, and the reality hasn’t changed much. If the AI doesn’t understand the data, the chat experience breaks very quickly.

What has changed is that organizations are now trying to move beyond demos. They want AI agents and AI-powered workflows in production.

And production is much less forgiving than a demo.

Trend #1: “Chat with your data” is still the door opener

The most common entry point is still some version of “chat with your data.”

Business users want to ask questions in natural language. Data teams want to reduce ad-hoc requests. Executives want answers faster. Everyone wants the magic box where you type a question and get a reliable answer.

This is not surprising. Snowflake, Databricks, Microsoft, Google, and others are all pushing the market in this direction. Snowflake has Cortex Analyst, Databricks has Genie, and the broader AI ecosystem is converging around standards like MCP

The surprise is that “chat with data” is often not the end goal.

It’s the easiest way to describe the pain.

The real pain is that business users don’t know where the data is. Analysts are tired of being the routing layer between every business question and every database table. Data engineers are tired of maintaining fragile logic across dashboards, notebooks, semantic layers, and half-forgotten SQL files.

So the user says “chat with data,” but what they often mean is:

Please make our data understandable enough that humans and machines can use it without opening five Slack threads and asking the one analyst who has been here since 2017.

That’s a much bigger problem.

Trend #2: the analyst bottleneck is getting worse

Another strong pattern: data teams are overwhelmed.

Not “busy.” Overwhelmed.

In many organizations, analysts are still spending too much time finding the right table, reverse-engineering old dashboards, understanding metric definitions, and figuring out which version of a query is trusted.

We’ve written before that nobody cares about the efficiency of the data analyst. That headline was intentionally annoying, but the point was real: the business usually doesn’t buy “analyst efficiency” as the main outcome.

What they do care about is speed to decision.

What we’re seeing now is that the analyst bottleneck is becoming a business bottleneck. The business wants campaigns optimized faster. Sales wants better account signals. Finance wants cleaner performance views. Operations wants alerts that trigger action, not another dashboard to check.

The analyst is sitting in the middle of all of that.

So organizations come to us with a technical problem, but underneath it is a business problem: the demand for data work has exceeded the human capacity to fulfill it.

AI is supposed to help, but without a trusted semantic layer, it creates more review work, not less.

Trend #3: manual semantic modeling does not scale

This one comes up again and again.

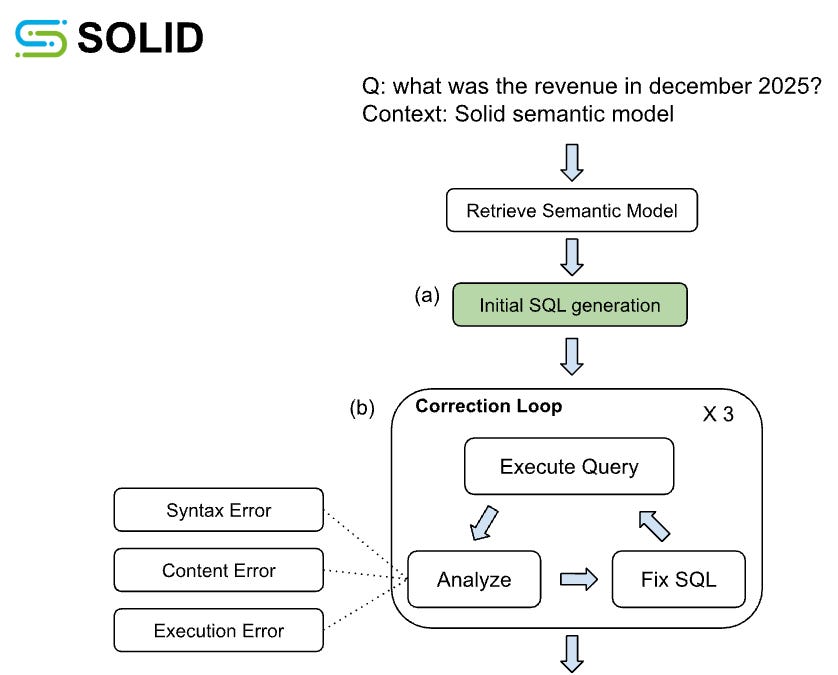

Enterprises understand the need for a semantic layer. They know that metrics, dimensions, relationships, definitions, and business logic need to be captured somewhere. They know that without this layer, Text2SQL and agents will hallucinate.

The issue is not awareness.

The issue is labor.

Manual semantic modeling is slow. Evaluation is slow. Maintenance is slower. Every new domain requires people to identify the right tables, understand the logic, define metrics, document relationships, test the model, and keep it current as the data changes.

That may work for a few high-value BI dashboards. It does not work for enterprise AI.

We wrote about this in Semantic layer for AI: let’s not make the same mistakes we did with data catalogs. The core mistake is believing humans will happily document and maintain everything if only the project is important enough.

They won’t.

Humans hate documenting data. They especially hate maintaining documentation they didn’t need in the first place.

So when organizations come to Solid, they’re often looking for a way to generate the semantic layer automatically, evaluate it automatically, and maintain it with much less manual effort.

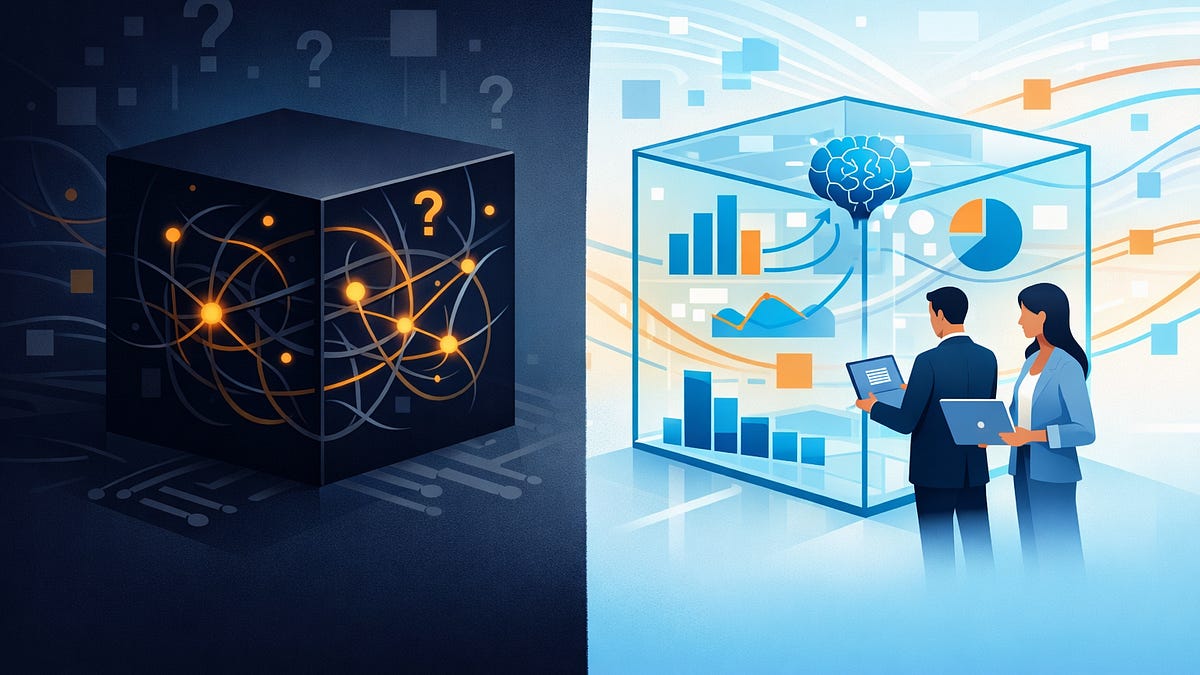

The important nuance: they don’t want a black box.

They want automation with control.

The model should be generated automatically, but reviewed by humans. Accuracy should be measured, not assumed. Fixes should be recommended, not hidden. The data team still owns the truth, but AI does the heavy lifting.

Trend #4: trust is becoming the real production blocker

Many organizations have already tried some version of “AI on top of data.”

The demo worked.

Then someone asked a slightly different question, and the answer was wrong.

Or the SQL looked plausible but used the wrong metric.

Or the agent joined tables in a creative way.

Or the business user got a number that didn’t match the dashboard.

That’s when the excitement turns into skepticism.

We wrote about this in Everyone wants Text2SQL, but the pros don’t trust it. The problem is not only whether AI can generate SQL. The problem is whether the people responsible for the data trust the generated SQL enough to put it in front of the business.

The market is now asking for evidence.

Not “our model is accurate.”

Not “the LLM is better now.”

Not “trust us.”

They want benchmarks. They want expected SQL. They want side-by-side validation. They want to know where the model failed, why it failed, and what needs to change.

This is a healthy shift.

AI in production requires measurable trust. Vibes are not enough.

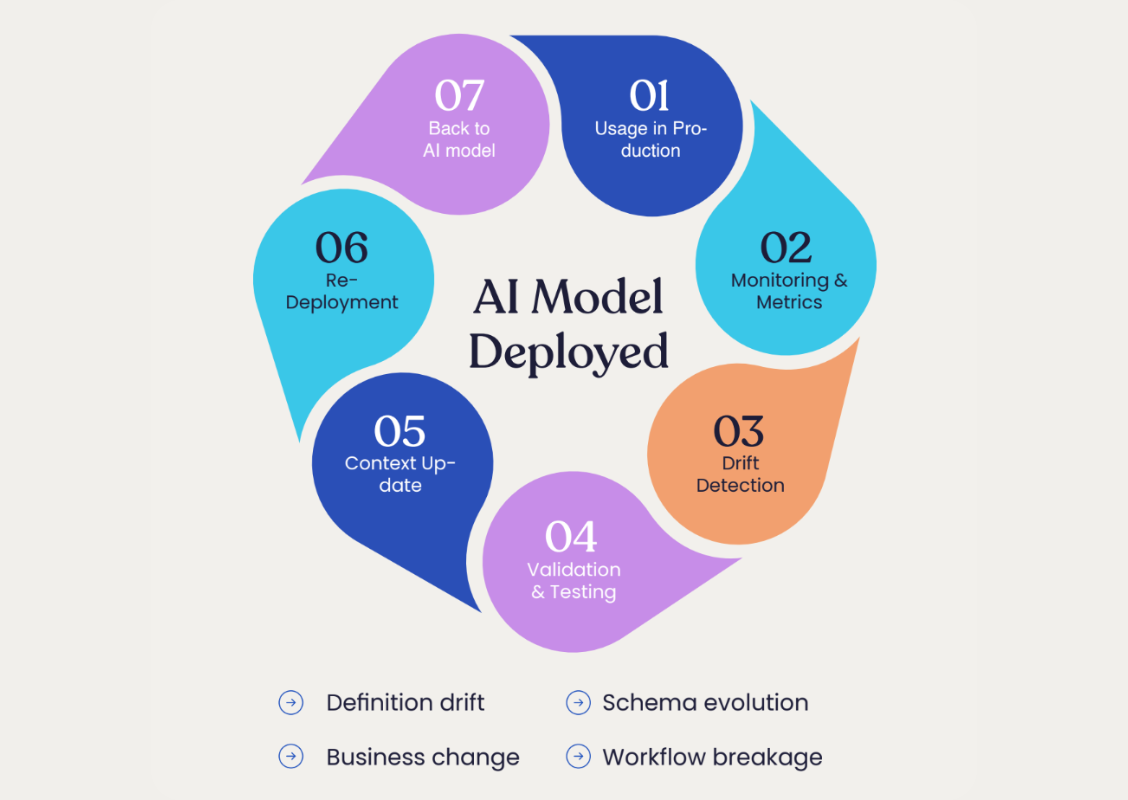

Trend #5: the use cases are becoming more operational

Another interesting shift: the use cases are moving from “answer my question” to “run my workflow.”

The early data chatbot dream was mostly analytical. A user asks a question. The system answers. Maybe it creates a chart.

Now we’re seeing more operational use cases:

An agent that analyzes account signals and recommends next actions.

An agent that reviews campaign performance and suggests changes.

An agent that helps prepare customer-facing teams before a meeting.

An agent that monitors operational issues and pulls the relevant context automatically.

An agent that turns business signals into prioritized workflows.

This matters because operational workflows raise the bar.

If an AI assistant gives you a wrong answer in a sandbox, that’s annoying.

If an AI agent takes the wrong action in a production workflow, that’s dangerous.

So the underlying data logic needs to be more governed, not less. The more autonomous the agent, the more important the semantic layer becomes.

The ROI from AI will not come from buying a generic assistant and hoping employees find uses for it. It will come from improving specific workflows.

That’s exactly what organizations are trying to do now.

Trend #6: customers want flexibility, not another locked-in layer

One theme I didn’t expect to be so strong: flexibility.

Organizations are very aware that the AI stack is changing quickly. Today they may be testing one agent framework. Tomorrow it may be another. One team may be using a cloud-native AI tool. Another may be experimenting with an orchestration platform. Another may want to export semantic models into dbt, Snowflake, Looker, or something else.

They do not want to rebuild their context layer every time the AI tool changes.

This is why the semantic layer needs to be decoupled.

It should serve many consumers: BI tools, AI agents, Text2SQL engines, internal apps, and whatever comes next. That’s also why MCP is interesting. Whether or not MCP becomes the standard, the direction is obvious: AI systems need a structured way to access tools and context.

The enterprise buyer is increasingly allergic to anything that feels like a closed semantic island.

They want one governed source of business logic that can travel.

Trend #7: the market is accepting that data will stay messy

This may be my favorite one.

For a long time, companies would start the conversation by apologizing for their data.

“Our data is a mess.”

“Our documentation is bad.”

“Our warehouse is complicated.”

“Our metric definitions are inconsistent.”

“We’re not ready yet.”

Recently, the conversation is changing.

Companies still know their data is messy, but they’re less interested in pretending they can clean everything before AI arrives.

Good.

We wrote about this in “Sorry for the mess” - everyone’s data is messy, and it’s Okay. The point is not that messiness is ideal. The point is that messiness is reality.

The winning approach is not to wait until the warehouse is perfect.

It’s to build AI that can learn from the messy signals that already exist: schemas, query logs, BI dashboards, documentation, tickets, Slack discussions, Confluence pages, metric definitions, and usage patterns.

That’s where the real organizational knowledge lives.

Not in one pristine document called “Final_Final_Official_Metric_Definitions_v7.”

What this means for the market

My takeaway from this qualitative analysis is simple:

The market has moved from curiosity to implementation.

In 2024 and early 2025, many companies were asking: “Can AI help with data?”

Now they’re asking: “What do we need to put in place so AI can safely work with our data?”

That’s a much better question.

It’s also a much harder one.

The answer is not just a better LLM. It’s not just a data catalog. It’s not just a BI tool with a chat box. It’s not just a manually built semantic layer.

It’s an AI-native context layer that can create, evaluate, expose, and maintain business semantics across the organization.

That sounds a bit buzzwordy, so let me say it more simply:

AI needs to know what your data means.

Not just what tables exist. Not just what columns are called. What the business means when it says revenue, active customer, churn risk, qualified lead, suspicious transaction, campaign performance, account health, or pipeline sufficiency.

And it needs to know that in a way that is testable, governable, and usable by agents.

The funny part: most companies don’t come asking for that

This is the part I find most interesting.

Very few organizations start the conversation by saying:

“We need an AI-native semantic layer that can be automatically generated, benchmarked, maintained, and exposed through MCP to downstream agents.”

No one talks like that. Thankfully.

They come with symptoms.

They want chat with data.

They want agents.

They want fewer ad-hoc requests.

They want faster dashboards.

They want to migrate off old BI.

They want better campaign analytics.

They want better sales signals.

They want to reduce manual analyst work.

They want to stop hallucinations.

They want to make AI useful.

Our job is to help connect those symptoms to the root cause.

The root cause is not that the LLM isn’t smart enough.

The root cause is that the organization’s data knowledge is scattered across systems, people, dashboards, SQL queries, documents, and tribal memory.

Solid’s job is to turn that scattered knowledge into something AI can actually use.

Urgency found the market

A few months ago I wrote that we found urgency, or maybe it found us

After looking at the last two months of conversations, I think that’s even more true now.

The urgency is not “semantic layers are cool again.”

The urgency is that enterprises want AI agents in production, and they’re realizing that agents without semantics are interns with database access.

Very enthusiastic. Very fast. Occasionally useful. Not someone you’d leave alone with important business decisions.

The next phase of enterprise AI will be less about demos and more about trust.

Less about asking questions and more about automating workflows.

Less about connecting AI to data and more about helping AI understand what the data means.

That’s the market pulse we’re seeing right now.

And honestly, it’s a pretty exciting one.

Other posts

%20(2).png)

.png)